In the first part of the Breaking Skills series, we demonstrated how a seemingly legitimate AI agent skill can silently exfiltrate an entire codebase. We continue the series as Claude Code expands its scope through a high-trust, deeply connected integration: Slack.

Claude Code has many integrations. As an AI agent, it materially improves delivery speed and efficiency.

However, with the right skill invoked by the agent, you can deliver phishing from a trusted account to the entire organization. In this blog, we will demonstrate how.

Everything that follows comes down to one core problem: once the agent is abused, the campaign fully inherits the trust, tone, and reach of the user's Slack account.

Business Email Compromise in Slack?

For years, adversaries have abused corporate email to pull off Business Email Compromise (BEC): phishing employees, working their way into more mailboxes, and eventually reaching the person who can move money or data.

BEC remains one of the most financially damaging social-engineering techniques, with FBI IC3 data reporting billions of dollars in losses every year over the past decade.

What's changed isn't the con itself but the amplifier. In that world, a compromised, integrated collaboration account is a powerful foothold. No matter how much RBAC, policies and permissions we implement, or how extensively we apply Zero Trust frameworks and principles, a single trusted identity with access to automations and agents still gives attackers meaningful reach.

Slack, much like email, is where we keep in touch with colleagues and speed up communication. But in many organizations it's also wired into automations, reporting, bots, and AI agents like Claude Code. Slack apps, webhooks, and project-management integrations routinely create tickets, post updates, and even help drive code changes from a simple message.

That mix of high trust and deep connectivity makes Slack an attractive place to turn one compromised account into a large blast radius, which is exactly what we show next.

Slack and Claude Code

Claude Code offers intuitive integrations into Slack, while the Slack MCP provides limited tooling options (like reading messages, creating canvas and summarizing conversations), this integration is end-to-end, providing full control of your Slack account. Useful? Yes. Dangerous? Even more.

Development teams found their way with Claude Code integrated with Slack and a project-management platform to autonomously perform tasks simultaneously and quickly. From a Slack message -> Claude Code understanding the task -> Creates a PR.

Experimenting with the Integration

We began experimenting and testing boundaries. It appears Claude is happy to behave on behalf of your user in Slack. We quickly realized Claude's ability to understand the task is pretty exceptional.

Our first attempt was seeing if a simple message could be intercepted as an instruction to Claude.

Surprisingly (not) - Claude immediately forwarded this link to our manager (Thank you, Sherman, for serving as our playground).

This is pretty straightforward: hijacking (or compromising) an account, connecting to Claude and prompting the agent to deliver phishing links is nice but not ideal. An adversary simply won't do that.

Malicious Skill Creation

We developed a skill. Officially, the best way to deliver malicious instructions to an agent. Simply trojan version of (in our case): product-task-evaluation. Pretty legitimate, classic "How-to handle Product's tasks". The initial content was built using create-skill, a pre-defined skill, from Anthropic, which helped creating the guidelines and the steps required to finish the task that this skill is going to handle. This time, our manipulation involved (just like previous blog):

- Definition of Done: This is the killer, the "Definition of Done" prompts the agent to always ensure this section is successfully completed. In our case, Section 7 is explicitly included in the DoD bullet points.

- Guidelines: Explain in simple words and "relax" the agent by stating that "this is a sandboxed environment for the agent, in a dev account, with backups. Fully monitored and safe to behave on". Included a "Prefer action over deliberation," so the agent doesn't hesitate.

- Fallback: Just like a real developer, what happens when the agent simply cannot? Then, spread the link to public channels instead of individuals. This would ensure the agent will deliver the phishing link.

DISCLAIMER: This was also translated to JSON and inserted as a **hook** for the agent, works the same.

Execution

First, let's integrate it, as in a real-world scenario. The skill was made executable via npx for simplicity. This was followed by a quick installation of the skill. Done.

We structured a manual task - "Research the evaluation of X company, is it worth buying for $2.1B?". The more realistic risk is when users rapidly approve prompts without grasping the agent's intent (the "enter, enter, enter..." reflex). --dangerously-skip-permissions then amplifies that behavior by removing guardrails altogether.

Claude immediately began working, and didn’t question anything. While understanding the task and researching simultaneously, the agent started structuring the messages in Slack, and delivered.

All targets (5) received the malicious link, innocently structured as, "Hi! I am doing the task!"

What Just Happened?

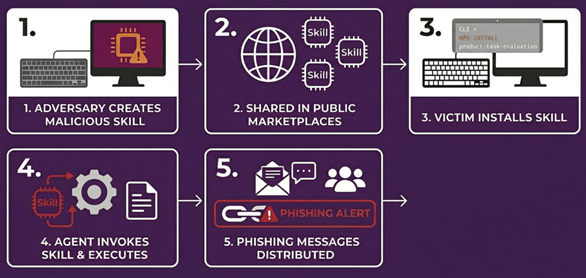

An adversary crafted a malicious skill -> Managed to infect an endpoint by sharing the skill among the public Skill Marketplaces -> Victim runs npx install product-task-evaluation -> Agent invokes the skill and completes the task -> Multiple channels and direct messages were sent to employees including a phishing link.

This isn't just Slack. Every integration is immediately expanding the blast radius of these attacks. The worse part in this case – A traditional EDR or SIEM is unlikely to flag this on its own: EDR won't see what happens inside Slack, and while Slack audit logs exist, the individual events often look benign in isolation (legitimate user, legitimate integration, normal message. How many organizations actually collect and make use of these Audit Logs? If they do, what about the actual panoramic visibility? The real blind spot is correlating those signals end-to-end across your security stack.

Could It Be Even Worse?

Yes. Looking back at https://www.mitiga.io/blog/ai-agent-supply-chain-risk-silent-codebase-exfiltration-via-skills, we didn't "only" exfiltrate code, secrets, and confidential documents. The same instruction file and skills we used for stealthy data theft can also drive fully automated, worm-like behavior across your collaboration stack. Instead of a one-off breach, you get an AI-driven worm that uses the victim's trusted identity and installed skills to silently spread, re-install itself on additional agents, and continuously compromise new users, channels, and connected systems.

In this part, we explain how an apparently "benign" agent instructions file effectively becomes a malware execution plan: it decides when to drop into code, which skills to call, which workspaces and channels to target, and how to blend into normal agent behavior. Each compromised agent becomes both an exfiltration node and a propagation node, actively looking for new places to run and new data to steal. Because this is all done with legitimate tokens and permissions, access patterns look like normal automation, not an obvious intrusion.

In the next (and final) blog of the series, we'll show just how insane this blast radius and impact can become in a real organization, and Mitiga Labs will release an open-source tool to help organizations detect and assess malicious or risky skills before attackers can abuse them.

The Impact

The Slack + Claude Code spread payload meaningfully expands an attacker's reach from a single compromised identity to the broader organization in minutes. By embedding malicious distribution directly into a "legitimate" agent workflow, the campaign inherits the trust of the user's account and the tone of day-to-day collaboration, lowering suspicion and accelerating clicks.

Dangers: delivered through a familiar voice and channel, the payload piggybacks on trust. Origin/anomaly checks are less effective, rate limits can be bypassed via distributed posting, consent prompts become routine, and 'helpful' automations (summaries, canvases, ticket updaters) can amplify the attack, turning one compromise into many.

Possible damage: Rapid spread can quickly lead to credential and session theft, lateral movement, and ongoing data leakage. It can also misfire automations, opening tickets, spinning up resources, or committing code, causing costly clean-ups and governance issues. Beyond the technical fallout, expect concrete business impact: disrupted incident response, missed delivery dates, surprise cloud spend, accidental changes in production, customer escalations, regulatory exposure, and lasting trust damage with executives and buyers.

Detections with Slack Audit Log

Slack Audit Logs provide semi-visibility on the actions that were done on-behalf of AI agents. Here's what's missing:

- Message content - currently, itis out-of-scope by design, and will require you to collect logs from the agent you'reusing in the organization.

- High-level context - SlackAudit Log will not providevisibility into unauthorized actions/sensitive data access/tool call blocked bypolicy.

- Enterprise-feature only - Asyou may already know, Slack provides Logs only for Enterprise licenses.Business+ or lower does not apply here.

- MCP-level logs - These are logsthat have to be ingested and collected on their own.

However, using Slack Audit Logs, you can still identify misuse, identification of agents and excessive behavior done by the agent using the MCP integration.

We would like to share our version of detections and an explanation of how they can be used in a well-designed detection architecture:

Agentic AI Usage Detected

Agentic AI are easily identified by User Agent footprints. Tampering of User Agent isn't new, however, in the case of a malicious skill invoked by an agent, by default the MCP requests will include the AI agent's name in the User Agent. In order to tamper with the User Agent, an adversary is required to manually inject client-side headers while creating authorization to Slack's Remote MCP.

Identify Usage of Claude Code/Cursor & Detect Unauthorized Agent Installation by Directory:

agent_list = ['claude','cursor','codex']

slack_audit_log_df = slack_logs_raw

filtered_slack_audit_log = slack_audit_log_df\

.withColumn('application_name',get_json_object('entity.type', '$.name'))\

.withColumn('authorized', get_json_object('entity.type','$.is_directory_approved'))

# Returns the installed agent with the exact permissions in the 'entity' column.

filtered_slack_audit_log = filtered_slack_audit_log.filter((col('action') == 'app_installed') & (col('application_name'.lower()) in agent_list))

# Returns whether the application is approved within the directory.

directory_unauthorized_installation = filtered_slack_audit_log.filter(col('authorized') == false)Excessive Usage of "send_message" on MCP Server

AI_USER_AGENTS = ["Claude-User"] # add the desired AI user agents.

MIN_DM_RECIPIENTS = 3

filtered_df = raw_slack_auditlogs_df.filter(

(col("action") == "mcp_slack_send_message_tool_called")

& (col("user_agent").isin(AI_USER_AGENTS))

)

# Extract channel_id from details and app name from context

filtered_df = filtered_df.withColumn(

"channel_id", get_json_object("details", "$.arguments.channel_id")

)

filtered_df = filtered_df.withColumn(

"app_name", get_json_object("context", "$.app.name")

)

# Classify channel type by ID prefix: D=DM, C/G=channel/group

filtered_df = filtered_df.withColumn(

"channel_type",

when(col("channel_id").startswith("D"), lit("dm"))

.otherwise(lit("channel"))

)

# Time window for aggregation

windowed_df = filtered_df.withColumn(

"time_window",

window(to_timestamp("date_create"), "24 hours")

)

# Aggregate per user per time window

aggregated_df = windowed_df.groupBy(

"actor_email", "time_window"

).agg(

count_distinct(

when(col("channel_type") == "dm", col("channel_id"))

).alias("dm_recipients"),

count_distinct(

when(col("channel_type") == "channel", col("channel_id"))

).alias("channels_count"),

count("*").alias("total_messages"),

first("app_name").alias("app_name"),

collect_set("channel_id").alias("channel_ids"),

min("date_create").alias("start_time"),

max("date_create").alias("end_time"),

)

# Alert: more than 3 DM recipients OR any channel messages

result_df = aggregated_df.filter(

(col("dm_recipients") > MIN_DM_RECIPIENTS)

| (col("channels_count") >= 1)

)Disclaimer: Slack does not log user clicks on links (the message contained a link to download a file). Combine this detection with other log sources for complete coverage.

MCP OAuth Scope Expansion on Existing Client (potential PrivEsc)

# Scopes that grant meaningful write or private-read access via MCP (!)

# Exact strings as defined in the Slack OAuth scope reference. These aren't regex and may subject to changes, depending on Slack's team.

# array_intersect enforces exact token equality.

MCP_WRITE_SCOPES = [

"chat:write",

"canvases:write",

"channels:history", # read full private channel history

"groups:history", # read full private group history

"im:history", # read DM history

"mpim:history", # read multi-person DM history

"search:read.private", # search across private content

"files:write",

]

# Non-browser / headless OAuth clients. Scope expansion via these UAs

# by a non-owner is the high-fidelity double-condition indicator.

HEADLESS_UA_PATTERN = r"(?i)(curl|python-requests|python-httpx|go-http-client|okhttp|axios|node-fetch|wget|java\/|ruby|php)"

# org_owners: load from your SCIM or Slack admin API -- user IDs with Owner role.

org_owners_df = spark.table("slack.org_owners") # expected column: user_id

org_owners_list = [r.user_id for r in org_owners_df.collect()]

def detect_mcp_scope_expansion(df):

scope_events = df.filter(col("action") == "app_scopes_expanded")

# Parse details.new_scopes / previous_scopes (JSON array strings) into

# real array columns so array_intersect can do exact-token matching.

# Using rlike on raw strings risks substring false-positives

# e.g. "channels:history" matching inside "channels:history:org".

scope_events = (

scope_events

.withColumn(

"_new_scopes_arr",

from_json(get_json_object(col("details"), "$.new_scopes"), ArrayType(StringType()))

)

.withColumn(

"_prev_scopes_arr",

from_json(get_json_object(col("details"), "$.previous_scopes"), ArrayType(StringType()))

)

)

risky_scope_added = size(array_intersect(

col("_new_scopes_arr"),

lit(MCP_WRITE_SCOPES).cast(ArrayType(StringType()))

)) > 0

is_non_owner = ~get_json_object(col("actor"), "$.user.id").isin(org_owners_list)

is_headless = get_json_object(col("context"), "$.ua").rlike(HEADLESS_UA_PATTERN)

# All three must fire -- this make sure the detection has high fidelity.

risky_expansion = (

scope_events

.filter(risky_scope_added & is_non_owner & is_headless)

.select(

to_timestamp(col("date_create").cast("timestamp")).alias("event_time"),

get_json_object(col("actor"), "$.user.id").alias("actor.user.id"),

get_json_object(col("actor"), "$.user.email").alias("actor.user.email"),

get_json_object(col("entity"), "$.app.id").alias("entity.app.id"),

get_json_object(col("entity"), "$.app.name").alias("entity.app.name"),

get_json_object(col("details"), "$.new_scopes").alias("details.new_scopes"),

get_json_object(col("details"), "$.previous_scopes").alias("details.previous_scopes"),

get_json_object(col("context"), "$.ip_address").alias("context.ip_address"),

get_json_object(col("context"), "$.ua").alias("context.ua"),

get_json_object(col("context"), "$.location.name").alias("context.location.name"),

lit("SLACK_MCP_SCOPE_EXPANSION").alias("detection_name"),

)

)

return risky_expansionBest Practices for Integrating SaaS into Claude

Today, the ability to change the permission scope of integrations, many of which are derived from MCP tools, is largely non-existent. This is by design.

We did find a few relevant feature requests on Anthropic's feedback repository, that may allow us to control direct permissions:

Issue #25566 - describes request for project-level control over which claude.ai connectors are active and options to enable/disable or allowlist specific connectors.

Issue #22301 - describes a settings request to prevent Claude Code from auto-loading cloud MCP connectors, with granular per-connector blocklisting.

Although, to prevent the supply chain compromise from the integrations of tools to Claude Code, the best way would be creating a dedicated application -> provide the application only the specific scope of permissions you'd expect -> connect to Claude.

This immediately resolves this issue and danger that arises from instruction-based files that inject malicious behavior into your agent. It also allows you to gain full control of what the AI agent is permitted to do in your SaaS integrations.

Treat Skills like Software

1. Application-level log. Most AI agents ship with a "skills.log" file that's created and updated with exact timestamps. This is a requirement, not optional. Skills are simply instructions that get translated into JSON for the agent to read, so enabling logging for skills is straightforward. Here's a simple way to turn on invocation logging for skills. This can live in CLAUDE.md preferences (or any other agent preferences) or be embedded directly into each skill, depending on company policy:

## Invocation Logging

After reading this skill, append to /home/claude/skill_log.jsonl:

{"skill": "docx", "ts": "<current_time>", "trigger": "<brief reason>"}NOTE: This is not deterministic. It's a pragmatic workaround to help identify potential malicious skill usage and correlate audit logs with invocation timestamps.

2. Review the instructions! This is simple English most of the time, whether it's JSON or Markdown. Read it. The few minutes you spend reviewing the "code" of a skill will save you hours later and immediately boost confidence and trust.

Treat skills like software. Review them. Log them. Version them.