ShinyHunters have earned a reputation over the last few years for reliably compromising environments. So far, their list of victims includes multiple Fortune 500 companies. Most recently, it was confirmed that Rockstar, the video game developer behind the Grand Theft Auto series, had been affected after Anodot disclosed a data leak from its Snowflake instance.

This has left many organizations and security teams worried. Will GTA 6 be delayed? More importantly: Are we covered for a Snowflake breach? Do we collect the right logs? Do we have anomaly detection mechanisms in place? Who's in charge of this?

Before we jump in, let this be a reminder: tactical threat hunting matters.

Timeline: When, What, Where?

On April 4, 2026, Anodot's public status page reported a broad outage, stating that all data collectors were down. The affected collectors it listed included Snowflake, S3, and Kinesis.

Three days later, on April 7, 2026, BleepingComputer reported that more than a dozen companies had been hit by data-theft attacks after a SaaS integration provider was breached and authentication tokens were stolen. The supply chain was hit yet again, and major companies were immediately at risk.

It wasn't limited to Snowflake customers. Reports also claim that Salesforce was targeted again, with the attackers trying to pivot into Salesforce environments as well. However, other reports say the attempt was "successfully" detected and access was blocked. Details remain murky.

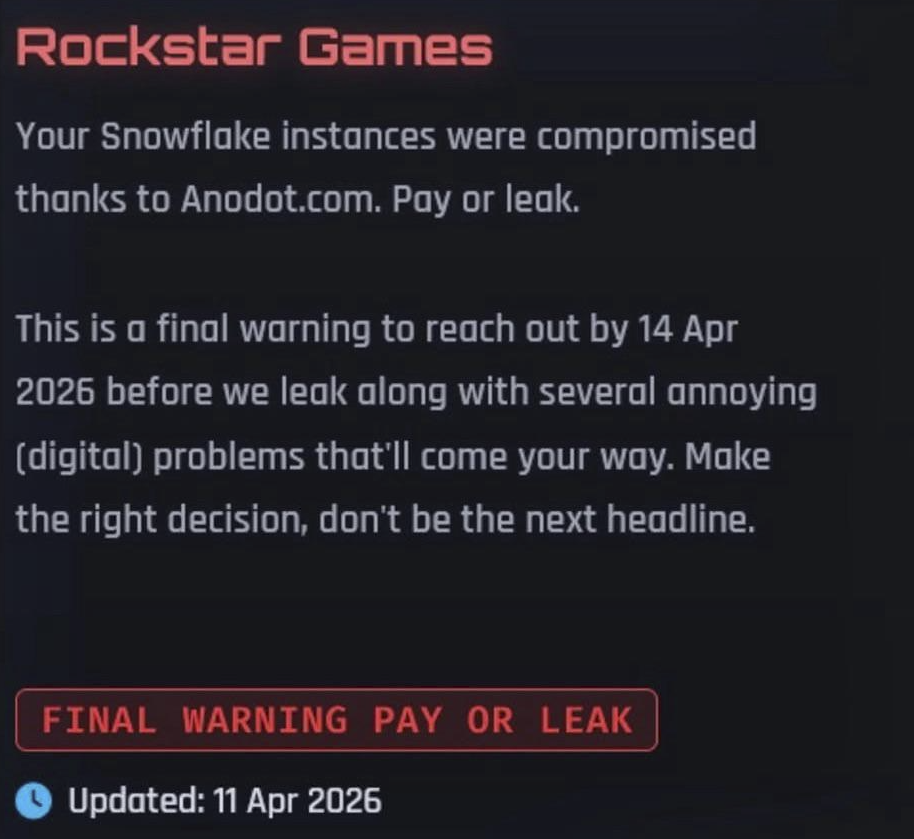

On April 11, 2026, ShinyHunters posted their demands on their dark-web site. Deadline: April 14, 2026.

A day later, on April 12, 2026, Rockstar responded to the group's claims of having gained access to "Rockstar Games' financial data, player spending habits, marketing timelines, and contracts with outsourcing companies." The company said that "a limited amount of non-material company information was accessed in connection with a third-party data breach" and that there was "no impact on our organisation or our players."

What happened to Rockstar?

It's not just Rockstar. More organizations may be impacted today, tomorrow, or in the coming weeks.

When we put the pieces of the puzzle together, the picture is still blurry, but its outline is visible:

ShinyHunters gained access to Anodot, a third-party provider of analytics on its clients' collected data. As previous incidents have shown, patient zero is almost always used as a springboard to compromise a chain of downstream organizations. Just as in the Drift incident, ShinyHunters stole authentication tokens, this time from Anodot. Using those tokens, they simply started "selecting" data and exporting it, silently and seamlessly. Anodot's service disruption and Rockstar's own statement are consistent with this picture.

What do we know so far?

Correlating the publicly available information, patient zero was Anodot. ShinyHunters obtained unauthorized access to it, though exactly when, how, and why remains unclear, and that access appears to have been both broad and privileged.

This allowed them to collect authentication tokens for service accounts belonging to Anodot's clients. As of now, no public indicators of compromise (IOCs) have been released. However, it's crucial to understand ShinyHunters' commonly observed infrastructure. This includes:

- Untrusted VPN nodes (Mullvad in particular)

- Tor exit nodes

- IP addresses belonging to unfamiliar cloud providers

- Known IOCs from incident reports of the last year or so
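To make the infrastructure list above actionable, here is a minimal Python sketch that tags login records whose source IP falls inside known-bad ranges. It assumes login rows are dicts with a `CLIENT_IP` key (as in Snowflake's login history). The `IOC_NETWORKS` ranges are RFC 5737 documentation placeholders, not real ShinyHunters infrastructure. Swap in ranges from published incident reports, Tor exit-node lists, and VPN provider ranges you trust.

```python
import ipaddress
from typing import Iterable

# Placeholder IOC ranges (RFC 5737 documentation networks), NOT real
# ShinyHunters infrastructure. Replace with ranges from published incident
# reports, Tor exit-node lists, and VPN provider ranges you trust.
IOC_NETWORKS = {
    "tor_exit_placeholder": ["192.0.2.0/24"],
    "vpn_placeholder": ["198.51.100.0/24"],
    "incident_report_placeholder": ["203.0.113.7/32"],
}


def build_ioc_index(ioc_networks):
    """Parse the CIDR strings once into (label, network) pairs."""
    return [
        (label, ipaddress.ip_network(cidr, strict=False))
        for label, cidrs in ioc_networks.items()
        for cidr in cidrs
    ]


def match_logins_to_iocs(logins: Iterable[dict], ioc_index) -> list:
    """Return the login rows whose CLIENT_IP falls inside any IOC network."""
    hits = []
    for login in logins:
        try:
            ip = ipaddress.ip_address(login["CLIENT_IP"])
        except ValueError:
            continue  # skip malformed or empty IP values
        # IPv4 addresses never match IPv6 networks (and vice versa); no error is raised.
        labels = [label for label, net in ioc_index if ip in net]
        if labels:
            hits.append({**login, "ioc_labels": labels})
    return hits


# Usage: two hypothetical service-account logins, one from a placeholder range.
logins = [
    {"USER_NAME": "ANODOT_SVC", "CLIENT_IP": "192.0.2.45"},
    {"USER_NAME": "FIVETRAN_SVC", "CLIENT_IP": "10.1.2.3"},
]
for hit in match_logins_to_iocs(logins, build_ioc_index(IOC_NETWORKS)):
    print(hit["USER_NAME"], hit["CLIENT_IP"], hit["ioc_labels"])
```

Parsing the CIDRs once and reusing the index keeps the check cheap enough to run over a full day of login history.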

After collecting those authentication tokens, the group moved on to additional victims in the supply chain. This includes Rockstar: the attackers used service account tokens deployed in its environment (or in a third-party contractor's environment on Rockstar's behalf) to exfiltrate data, and repeated the same pattern against other organizations.

Attackers do NOT break in. They LOG in.

Anodot was the initial target, so the very first intrusion was allegedly a true "break-in." But from that point on, just as in the Drift incident that affected hundreds of companies, every downstream victim was compromised by a group that simply logged in.

How to act today before extortion begins

Before acting, let's understand the main factors that made this possible:

- Token hygiene: Stolen tokens are dangerous because they bypass the controls people usually expect to stop account takeovers, such as passwords and MFA. This is especially true for service account tokens, which are often long-lived, broadly scoped, not sender-constrained, and rarely even monitored (NHI, anyone?).

- Over-trust: Third-party integrations are often granted broad access and then overlooked. It's the classic delegated-trust problem.

- Visibility: When a trusted connector accesses Snowflake, its traffic can look normal unless you're monitoring for subtle changes. Hence the basics: Collect, Detect, Respond.
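The token-hygiene properties above (long-lived, broadly scoped, not sender-constrained, unmonitored) can be turned into a quick audit. The sketch below assumes a hypothetical `Credential` record that you would populate from your own secrets or NHI inventory. It is not a Snowflake or vendor schema. The 90-day age limit is a placeholder. The "broad" role set uses Snowflake's built-in administrative roles as an example only.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone


# Hypothetical inventory record: these fields are assumptions, not a Snowflake
# or vendor schema. Populate them from your own secrets / NHI inventory.
@dataclass
class Credential:
    name: str
    created_at: datetime
    roles: list = field(default_factory=list)
    sender_constrained: bool = False  # e.g. bound via mTLS/DPoP; an IP allowlist is a weaker proxy
    monitored: bool = False           # True if its activity feeds a detection


# Placeholder thresholds: tune them to your own policy.
MAX_AGE = timedelta(days=90)
BROAD_ROLES = frozenset({"ACCOUNTADMIN", "SECURITYADMIN", "SYSADMIN"})


def audit_credential(cred, now):
    """Return hygiene findings for one credential (an empty list means clean)."""
    findings = []
    if now - cred.created_at > MAX_AGE:
        findings.append("long-lived")
    if BROAD_ROLES.intersection(r.upper() for r in cred.roles):
        findings.append("broadly-scoped")
    if not cred.sender_constrained:
        findings.append("not-sender-constrained")
    if not cred.monitored:
        findings.append("unmonitored")
    return findings


# Usage: a hypothetical year-old integration token holding an admin role.
now = datetime.now(timezone.utc)
token = Credential("ANODOT_SVC", now - timedelta(days=400), roles=["sysadmin"])
print(token.name, audit_credential(token, now))
```

Returning a list of named findings, rather than a single pass/fail, makes it easier to prioritize: a token that is both unmonitored and broadly scoped deserves attention first.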

Detect it/Hunt it.

- Check whether you use Anodot. If you do, start there. This can save you a lot of time versus doing behavioral analysis on logs that are barely understandable at scale.

- Start with "dry" indicators: simple, signature-based, black-and-white IOCs. Focus on the IP addresses and infrastructure already published in incident reports, and cross-reference them with the infrastructure listed above.

- Baseline your service accounts. What are your service accounts actually doing? Are they monitored? Do you know what permissions they have? How "aggressive" do they look in your logs?
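One way to start baselining is to keep, per service account, the set of IPs it has used and the distribution of its daily query volume, then compare each new day against it. Below is a minimal sketch. It assumes hypothetical history rows with `user`, `date`, `client_ip`, and `query_count` fields. The `k=3.0` threshold and seven-day minimum history are arbitrary starting points, not tuned values.

```python
import statistics
from collections import defaultdict


def build_baseline(history):
    """Per-account baseline from hypothetical rows:
    {"user": str, "date": str, "client_ip": str, "query_count": int}."""
    daily = defaultdict(lambda: defaultdict(int))  # user -> date -> total queries
    known_ips = defaultdict(set)                   # user -> IPs seen so far
    for row in history:
        daily[row["user"]][row["date"]] += row["query_count"]
        known_ips[row["user"]].add(row["client_ip"])
    baseline = {}
    for user, per_day in daily.items():
        counts = list(per_day.values())
        baseline[user] = {
            "known_ips": known_ips[user],
            "mean": statistics.fmean(counts),
            "stdev": statistics.pstdev(counts),
            "days": len(counts),
        }
    return baseline


def score_day(baseline, user, client_ips, query_count, k=3.0, min_days=7):
    """Compare one account-day against its baseline and return findings."""
    b = baseline.get(user)
    if b is None or b["days"] < min_days:
        return ["no-baseline"]  # too little history to judge
    findings = []
    new_ips = set(client_ips) - b["known_ips"]
    if new_ips:
        findings.append("new-ip:" + ",".join(sorted(new_ips)))
    # Floor the stdev at 1.0 so perfectly regular accounts don't alert on tiny changes.
    if query_count > b["mean"] + k * max(b["stdev"], 1.0):
        findings.append("volume-spike")
    return findings
```

Integrations tend to be far more regular than humans, which is exactly why a simple mean-plus-k-standard-deviations rule can work for them. The same rule would be noisy for interactive users.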

Once you have that baseline in place, here are a few detections you can implement in your SIEM immediately. Any LLM can help convert these into your preferred detection language and schema.

Integration Anomalous Login - PySpark

Requires: An anomaly-detection engine, ideally one that provides context for which IP addresses are being used.

```python
from pyspark.sql import functions as sf

# Substrings that suggest a service / integration identity. This is a naming
# heuristic: short patterns such as "_SA" or "TRAY" can also match human users,
# so tune the list to your own naming conventions.
_SERVICE_PATTERNS = [
    "SVC_", "_SVC", "_SA", "_SERVICE", "_INT", "_BOT",
    "FIVETRAN", "ANODOT", "TABLEAU", "LOOKER", "DBT", "AIRBYTE",
    "MATILLION", "STITCH", "BOOMI", "MULESOFT", "INFORMATICA", "TRAY",
    "WORKATO", "INTEGRATION", "SERVICE_ACCOUNT",
]


def _service_account_condition(user_col="USER_NAME"):
    """OR together case-insensitive substring matches against _SERVICE_PATTERNS."""
    condition = sf.lit(False)
    for pattern in _SERVICE_PATTERNS:
        condition = condition | sf.upper(sf.col(user_col)).contains(pattern)
    return condition


def integration_login_anomalous_ip(raw_logins_df, metrics_df=None, date_to_work=None):
    """Flag successful service-account logins from IPs never seen before for that user.

    Expects USER_NAME, CLIENT_IP, IS_SUCCESS, EVENT_TIMESTAMP and a derived
    `date` column (e.g. sf.to_date("EVENT_TIMESTAMP")).
    """
    if raw_logins_df is None:
        return None

    # Snowflake's LOGIN_HISTORY stores IS_SUCCESS as 'YES'/'NO' text; accept booleans too.
    is_success = sf.upper(sf.col("IS_SUCCESS").cast("string")).isin("YES", "TRUE")
    service_logins_df = raw_logins_df.filter(is_success & _service_account_condition())

    # Default to the most recent day in the data when no date is given
    # (previously the metrics branch filtered on None and returned nothing).
    effective_date = date_to_work or (
        service_logins_df.agg(sf.max("date").alias("d")).collect()[0]["d"]
    )
    current_df = service_logins_df.filter(sf.col("date") == effective_date)

    if metrics_df is not None:
        # Use precomputed historical metrics (key/value rows) as the baseline.
        identity_col = "entity" if "entity" in metrics_df.columns else "user_email"
        historical_ip_df = (
            metrics_df
            .filter(sf.col("key") == "CLIENT_IP")
            .select(
                sf.col(identity_col).alias("USER_NAME"),
                sf.col("value").alias("CLIENT_IP"),
            )
            .distinct()
        )
    else:
        # Otherwise derive the baseline from earlier raw login events.
        historical_ip_df = (
            service_logins_df
            .filter(sf.col("date") < effective_date)
            .select("USER_NAME", "CLIENT_IP")
            .distinct()
        )

    # Keep only (user, IP) pairs that are absent from the baseline.
    novel_df = current_df.join(
        sf.broadcast(historical_ip_df),
        on=["USER_NAME", "CLIENT_IP"],
        how="left_anti",
    )

    # Aggregate first-seen IP activity per user per day.
    return novel_df.groupBy("USER_NAME", "date").agg(
        sf.count("*").alias("login_count"),
        sf.array_join(sf.collect_set("CLIENT_IP"), ", ").alias("new_ips"),
        sf.min("EVENT_TIMESTAMP").alias("EVENT_TIMESTAMP"),  # earliest novel login
        sf.min("CLIENT_IP").alias("CLIENT_IP"),  # deterministic representative IP
    )
```

This surfaces service-account logins from IP addresses never seen before for that account, which should almost never happen for a well-behaved integration.
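To sanity-check the first-seen-IP rule without a Spark cluster (for example, when building test fixtures), the same logic fits in a few lines of plain Python. This is a simplified mirror of the PySpark detection above. It assumes login rows are dicts with `USER_NAME`, `CLIENT_IP`, `IS_SUCCESS`, and an ISO-formatted `date` string, and it covers only the raw-events baseline path.

```python
from collections import defaultdict

# Same naming heuristic as _SERVICE_PATTERNS in the PySpark detection.
SERVICE_PATTERNS = (
    "SVC_", "_SVC", "_SA", "_SERVICE", "_INT", "_BOT",
    "FIVETRAN", "ANODOT", "TABLEAU", "LOOKER", "DBT", "AIRBYTE",
    "MATILLION", "STITCH", "BOOMI", "MULESOFT", "INFORMATICA", "TRAY",
    "WORKATO", "INTEGRATION", "SERVICE_ACCOUNT",
)


def first_seen_service_ips(logins, date_to_work):
    """Return {user: [new IPs]} for service-account logins on date_to_work
    from IPs that user never used on an earlier date."""
    known = defaultdict(set)  # user -> IPs seen before date_to_work
    today = defaultdict(set)  # user -> IPs seen on date_to_work
    for row in logins:
        if str(row["IS_SUCCESS"]).upper() not in ("YES", "TRUE"):
            continue  # only successful logins
        user = row["USER_NAME"]
        if not any(p in user.upper() for p in SERVICE_PATTERNS):
            continue  # not a service / integration identity
        if row["date"] < date_to_work:  # ISO date strings compare chronologically
            known[user].add(row["CLIENT_IP"])
        elif row["date"] == date_to_work:
            today[user].add(row["CLIENT_IP"])
    return {
        user: sorted(ips - known[user])
        for user, ips in today.items()
        if ips - known[user]
    }
```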

Service Account External Stage Creation

```python
from pyspark.sql import functions as sf

# Same service / integration naming heuristic as the previous detection.
_SERVICE_PATTERNS = [
    "SVC_", "_SVC", "_SA", "_SERVICE", "_INT", "_BOT",
    "FIVETRAN", "ANODOT", "TABLEAU", "LOOKER", "DBT", "AIRBYTE",
    "MATILLION", "STITCH", "BOOMI", "MULESOFT", "INFORMATICA", "TRAY",
    "WORKATO", "INTEGRATION", "SERVICE_ACCOUNT",
]

# Any external storage scheme appearing in the statement.
_EXTERNAL_URL_PATTERN = r"(?i)(s3://|gcs://|azure://|wasbs?://|abfss?://|https://)"

# Captures the value of URL = '...' in a CREATE STAGE statement.
_STAGE_URL_PATTERN = r"(?i)(?:url\s*=\s*['\"]?)(s3://[^\s'\"]+|gcs://[^\s'\"]+|azure://[^\s'\"]+|wasbs?://[^\s'\"]+|abfss?://[^\s'\"]+|https://[^\s'\"]+)"

# Text fallback in case your QUERY_TYPE values differ from CREATE_STAGE.
_CREATE_STAGE_TEXT_PATTERN = r"(?i)^\s*CREATE\s+(OR\s+REPLACE\s+)?(TEMP(ORARY)?\s+)?STAGE\b"


def service_account_external_stage_creation(raw_df):
    """Flag CREATE STAGE statements by service accounts that point to external storage.

    Expects query-history columns: USER_NAME, QUERY_TYPE, QUERY_TEXT.
    """
    if raw_df is None:
        return None

    # Identify service / integration accounts
    service_condition = sf.lit(False)
    for pattern in _SERVICE_PATTERNS:
        service_condition = service_condition | sf.upper(sf.col("USER_NAME")).contains(pattern)

    is_create_stage = (sf.col("QUERY_TYPE") == "CREATE_STAGE") | sf.col("QUERY_TEXT").rlike(
        _CREATE_STAGE_TEXT_PATTERN
    )

    # Detect CREATE STAGE statements pointing to external storage. The URL is
    # extracted first and the destination is classified from it, not from the
    # whole statement text; this also catches wasbs:// and abfs://.
    return (
        raw_df
        .filter(is_create_stage)
        .filter(sf.col("QUERY_TEXT").rlike(_EXTERNAL_URL_PATTERN))
        .filter(service_condition)
        .withColumn("stage_url", sf.regexp_extract(sf.col("QUERY_TEXT"), _STAGE_URL_PATTERN, 1))
        .withColumn(
            "destination_cloud",
            sf.when(sf.col("stage_url").rlike(r"(?i)^s3://"), sf.lit("AWS"))
            .when(sf.col("stage_url").rlike(r"(?i)^gcs://"), sf.lit("GCP"))
            .when(sf.col("stage_url").rlike(r"(?i)^(azure|wasbs?|abfss?)://"), sf.lit("AZURE"))
            .when(sf.col("stage_url").rlike(r"(?i)^https://"), sf.lit("WEB"))
            .otherwise(sf.lit("UNKNOWN")),
        )
    )
```

These events are notable because an external stage is a common prerequisite for moving data out of Snowflake (for example, by unloading data to it with COPY INTO), so this activity can indicate preparation for data exfiltration.
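Not every external stage is malicious, because data teams create them legitimately. One common way to cut noise is to compare the extracted stage URL against the storage locations you actually own. Below is a minimal plain-Python sketch. It assumes a hypothetical `APPROVED_PREFIXES` allowlist, and its bucket and storage-account names are placeholders.

```python
import re

# Captures the URL = '...' value of a CREATE STAGE statement (simplified
# version of _STAGE_URL_PATTERN above).
STAGE_URL_RE = re.compile(
    r"url\s*=\s*['\"]?((?:s3|gcs|azure|wasbs?|abfss?|https)://[^\s'\"]+)",
    re.IGNORECASE,
)

# Hypothetical allowlist of storage locations your organization owns.
APPROVED_PREFIXES = (
    "s3://example-corp-data-lake/",
    "azure://examplecorp.blob.core.windows.net/",
)


def unapproved_stage_destination(query_text):
    """Return the stage URL if it points outside the allowlist, else None."""
    match = STAGE_URL_RE.search(query_text or "")
    if match is None:
        return None  # no external URL found
    url = match.group(1)
    if url.lower().startswith(tuple(p.lower() for p in APPROVED_PREFIXES)):
        return None  # destination is one we own
    return url


print(unapproved_stage_destination(
    "CREATE OR REPLACE STAGE tmp URL='s3://unknown-bucket/dump/' "
    "CREDENTIALS=(AWS_KEY_ID='...' AWS_SECRET_KEY='...')"
))  # prints s3://unknown-bucket/dump/
```

Matching on full prefixes (scheme, bucket, and trailing slash) rather than on the bucket name alone avoids allowlisting look-alike buckets such as "example-corp-data-lake-exfil".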

Database Reconnaissance Burst with New Session

```python
from pyspark.sql import functions as sf

_RECON_QUERY_TYPES = [
    "SHOW_DATABASES", "SHOW_SCHEMAS", "SHOW_TABLES",
    "SHOW_COLUMNS", "SHOW_VIEWS", "SHOW_OBJECTS",
]

_RECON_TEXT_PATTERNS = [
    "INFORMATION_SCHEMA.TABLES",
    "INFORMATION_SCHEMA.COLUMNS",
    "INFORMATION_SCHEMA.SCHEMATA",
    "INFORMATION_SCHEMA.VIEWS",
    "SHOW DATABASES", "SHOW SCHEMAS", "SHOW TABLES",
    "SHOW COLUMNS", "SHOW VIEWS", "SHOW OBJECTS",
]

_MIN_DISTINCT_RECON_TYPES = 3
_SESSION_WINDOW_MINUTES = 5


def recon_burst_new_session(raw_df):
    """Flag sessions with a burst of varied recon queries right after the session starts.

    Expects query-history columns: SESSION_ID, USER_NAME, QUERY_TYPE,
    QUERY_TEXT, START_TIME (timestamp).
    """
    if raw_df is None:
        return None

    upper_text = sf.upper(sf.col("QUERY_TEXT"))

    # 1) Identify reconnaissance queries (by type or text)
    recon_type_condition = sf.col("QUERY_TYPE").isin(_RECON_QUERY_TYPES)
    text_condition = sf.lit(False)
    for pattern in _RECON_TEXT_PATTERNS:
        text_condition |= upper_text.contains(pattern)

    # Label each recon query by its first matching text pattern, falling back to
    # QUERY_TYPE with "_" -> " ", so SHOW_TABLES and "SHOW TABLES" count once.
    # (Counting raw QUERY_TYPE values alone misses INFORMATION_SCHEMA queries,
    # which are typically typed as SELECT.)
    recon_kind = sf.coalesce(
        *[sf.when(upper_text.contains(p), sf.lit(p)) for p in _RECON_TEXT_PATTERNS],
        sf.regexp_replace(sf.col("QUERY_TYPE"), "_", " "),
    )
    recon_df = raw_df.filter(recon_type_condition | text_condition).withColumn("recon_kind", recon_kind)

    # 2) Session start = first query of ANY kind in the session, not just the first recon query
    session_start_df = raw_df.groupBy("SESSION_ID", "USER_NAME").agg(
        sf.min("START_TIME").alias("session_start")
    )

    # 3) Keep only recon queries within the first N minutes of the session
    windowed_df = (
        recon_df
        .join(session_start_df, on=["SESSION_ID", "USER_NAME"])
        .filter(
            sf.unix_timestamp("START_TIME")
            <= sf.unix_timestamp("session_start") + _SESSION_WINDOW_MINUTES * 60
        )
    )

    # 4) Count recon queries and distinct recon kinds per session
    result_df = windowed_df.groupBy("SESSION_ID", "USER_NAME").agg(
        sf.min("session_start").alias("session_start"),
        sf.count("*").alias("recon_query_count"),
        sf.collect_set("recon_kind").alias("recon_query_types"),
    )

    # 5) Keep only sessions with enough diversity (burst behavior)
    return result_df.filter(sf.size("recon_query_types") >= _MIN_DISTINCT_RECON_TYPES)
```

This one is a strong signal: it flags sessions in which a user runs a burst of varied database reconnaissance queries within the first few minutes of the session.
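Each detection is noisy on its own. Together, the three map onto the stages of a plausible token-abuse chain: a service account logs in from a new IP, enumerates the database, then sets up an external stage. Below is a minimal sketch of correlating them per account and day. It assumes you have normalized each detection's output into hypothetical `(user_name, date, detection_name)` tuples. The weights and `min_score` threshold are illustrative, not tuned values.

```python
from collections import defaultdict

# Hypothetical weights: later stages of the chain count for more.
WEIGHTS = {"new_ip_login": 1, "recon_burst": 2, "external_stage": 3}


def correlate(alerts, min_score=4):
    """alerts: iterable of (user_name, date, detection_name) tuples.
    Returns account-days whose combined detections reach min_score."""
    fired = defaultdict(set)  # (user, date) -> distinct detections that fired
    for user, date, detection in alerts:
        fired[(user, date)].add(detection)
    results = []
    for (user, date), detections in sorted(fired.items()):
        score = sum(WEIGHTS.get(d, 0) for d in detections)
        if score >= min_score:
            results.append({
                "USER_NAME": user,
                "date": date,
                "score": score,
                "detections": sorted(detections),
            })
    return results
```

Counting each detection once per account-day (via the set) keeps a chatty detection from inflating the score on its own.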

Tactical threat hunting matters because by the time ShinyHunters posts a deadline, it's already too late.

Start threat hunting now.
